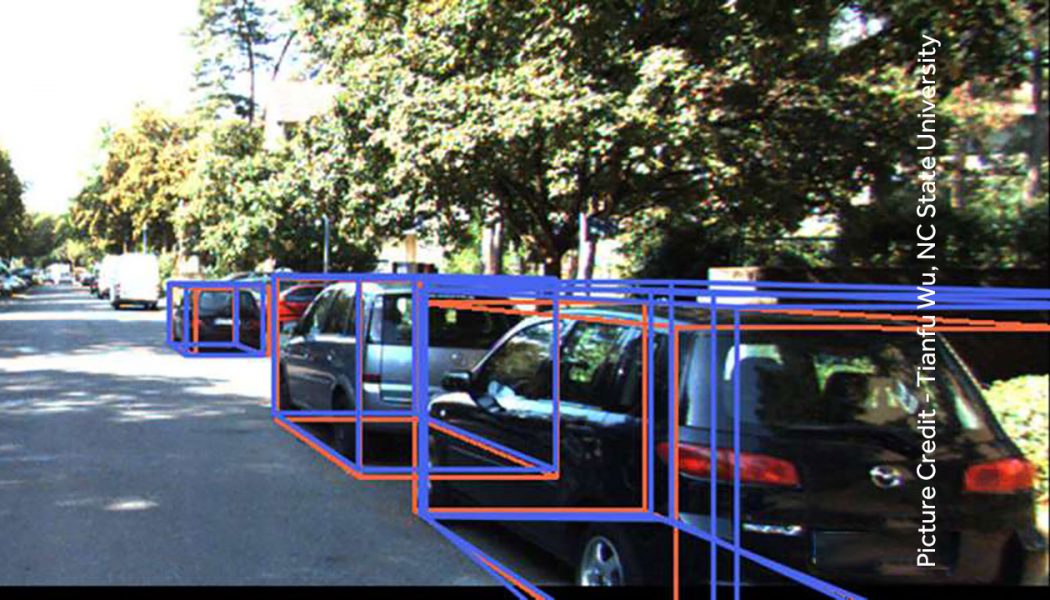

MonoCon is a new technique that improves the ability of artificial intelligence (AI) programs to use two-dimensional (2D) images to identify three-dimensional (3D) objects and how those objects relate to one another in space. Developed by researchers at North Carolina State University, MonoCon could help autonomous vehicles navigate using onboard cameras.

Although we live in a 3D world, the pictures we take are generally 2D. AI programs receive visual input from cameras, so to interact with the world they must be able to interpret what 2D images reveal about 3D space. The research team focused on one major part of that challenge: getting AI to accurately recognize 3D objects, such as people and vehicles, in 2D images and to place those objects correctly in space.
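To illustrate why a single 2D image leaves depth ambiguous, here is a minimal sketch assuming the textbook pinhole camera model. This is a general illustration, not MonoCon's method. The pinhole model maps a 3D point (X, Y, Z) in camera coordinates to a pixel by dividing by the depth Z, so every point along the same ray lands on the same pixel. The function `project` and the camera parameters `focal_length`, `cx` and `cy` are hypothetical values chosen only for the example.

```python
def project(point_3d, focal_length=800.0, cx=320.0, cy=240.0):
    """Project a 3D point (X, Y, Z) in camera coordinates to a pixel (u, v).

    Assumes an ideal pinhole camera; focal_length, cx and cy are
    hypothetical intrinsics used only for illustration.
    """
    X, Y, Z = point_3d
    if Z <= 0:
        raise ValueError("point must be in front of the camera (Z > 0)")
    # Dividing by depth Z is what discards 3D distance information.
    u = focal_length * X / Z + cx
    v = focal_length * Y / Z + cy
    return u, v


near_point = (1.0, 0.5, 10.0)   # 10 units from the camera
far_point = (2.0, 1.0, 20.0)    # twice as far, on the same viewing ray

print(project(near_point))  # (400.0, 280.0)
print(project(far_point))   # (400.0, 280.0)
```

Because the pixel alone cannot distinguish the near point from the far one, a system working from a single camera has to infer depth from other cues, such as the expected size of a car or a person.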

Applications

Beyond autonomous vehicles, the MonoCon technique could find applications in manufacturing and robotics. Most existing autonomous vehicle systems rely on LIDAR (Light Detection and Ranging), which uses lasers to measure distances and navigate in 3D space. Because LIDAR is expensive, these autonomous systems typically include little redundancy. Cameras are less expensive, so with MonoCon working from camera images, multiple cameras could be used, adding redundancy to the system.

Read more about the new development on TechXplore, in an article published on 26 January 2022 by Matt Shipman, North Carolina State University.